Memcached on Azure Brief

Dpsv5 Virtual Machines Powered by Ampere Altra Processors

Overview

Ampere® Altra® processors are designed from the ground up to deliver exceptional performance for Cloud Native applications such as Memcached. With an innovative architecture that delivers high performance, linear scalability, and amazing energy efficiency, Ampere Altra allows workloads to run in a predictable manner with minimal variance under increasing loads. This enables industry leading performance/watt and a smaller carbon footprint for real-world workloads such as Memcached.

Microsoft offers a comprehensive line of Azure Virtual Machines featuring the Ampere Altra Cloud Native processor that can run a diverse and broad set of scale-out workloads such as web servers, open-source databases, in-memory applications, big data analytics, gaming, media, and more. The Dpsv5 VMs are general-purpose VMs that provide 4 GB of memory per vCPU and a combination of vCPUs, memory, and local storage to cost-effectively run general-purpose workloads. The Epsv5 VMs are memory-optimized VMs that provide 8 GB of memory per vCPU, which can benefit memory-intensive workloads, including open-source databases, in-memory caching applications, gaming, and data analytics engines.

Memcached is an open-source, in-memory key-value data store that is typically used for caching small chunks of arbitrary data (strings, objects) from the results of database and API calls. Because it keeps data and objects in RAM, Memcached speeds up dynamic web applications by reducing the number of database lookups. It was one of the early, influential caching stores in the cloud and remains popular today.

In this workload brief, we ran Memcached on the Azure Dpsv5 VMs powered by Ampere Altra processors, the Intel® Ice Lake-based Dsv5 VMs, and the AMD Milan-based Dasv5 VMs, and measured the throughput and latencies on each of these offerings.

Fig. 1

Results and Key Findings

For large-scale cloud deployments, performance and price-performance are important metrics.

As seen in Figure 2, the Ampere Altra-based D16ps v5 VM delivered 4% higher throughput than the D16s v5 VM and 28% higher throughput than the D16as v5 VM.

Table-1 in the Benchmarking Configuration section lists the hourly cost of the D16ps v5, D16as v5, and D16s v5 VMs. After taking the hourly VM price into account, we observed that the D16ps v5 VM has a 30% price-performance advantage over the D16s v5 VM and a 43% price-performance advantage over the D16as v5 VM, as shown in Figure 3.
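The price-performance figures can be reproduced from the numbers already given in this brief. The sketch below assumes that price-performance means throughput divided by hourly price; the brief does not state the formula, so treat this as one plausible reading that happens to match the reported 30% and 43%.

```python
# Hourly on-demand prices, taken from the configuration table in this brief.
HOURLY_PRICE = {"D16ps v5": 0.616, "D16as v5": 0.688, "D16s v5": 0.768}

# D16ps v5 throughput relative to each x86 VM (the reported 4% and 28% leads).
ALTRA_RELATIVE_THROUGHPUT = {"D16s v5": 1.04, "D16as v5": 1.28}


def price_performance_advantage(baseline: str) -> float:
    """Return the D16ps v5 advantage in throughput per unit price, as a fraction.

    Assumption: price-performance = throughput / hourly price.
    """
    perf_ratio = ALTRA_RELATIVE_THROUGHPUT[baseline]
    price_ratio = HOURLY_PRICE[baseline] / HOURLY_PRICE["D16ps v5"]
    return perf_ratio * price_ratio - 1.0


for vm in ("D16s v5", "D16as v5"):
    print(f"D16ps v5 vs {vm}: {price_performance_advantage(vm):.0%}")
```

Under this assumption, the lower hourly price of the D16ps v5 VM accounts for most of the advantage over the D16s v5 VM, while the throughput lead accounts for most of the advantage over the D16as v5 VM.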

Benchmarking Configuration

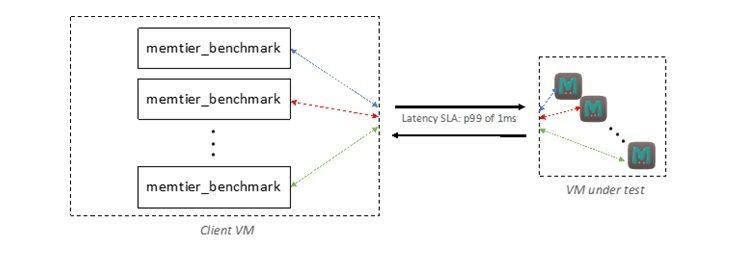

We used memtier_benchmark (developed by Redis Labs) as the load generator for benchmarking Memcached. Each test was configured to run with multiple threads, multiple clients per thread, and pipelining enabled.

We recommend compiling Memcached-server with GCC (GNU Compiler Collection) 10.2 or newer, because newer compilers generate better-optimized code that can improve performance for AArch64 applications.

We used Ubuntu 20.04.4 LTS with the 5.13.0-1017-azure kernel and Memcached 1.6.9 compiled with GCC 10.2 for our tests. We compared the Microsoft Azure D16ps v5, D16s v5, and D16as v5 VMs. For each test, we used similar clients to generate requests to Memcached-server.

Because throughput is most meaningful when measured under a specified Service Level Agreement (SLA), we required a 99th percentile latency (p.99) of at most 1 ms. This means that 99% of requests completed within 1 ms.
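As an illustration of what the p.99 criterion checks, the sketch below computes a percentile from a list of per-request latencies using the nearest-rank method and compares it with the 1 ms limit. The function names are hypothetical, and memtier_benchmark reports percentiles itself, so this is only meant to make the definition concrete, not to describe how the tool computes them.

```python
import math


def percentile(samples_ms, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples_ms:
        raise ValueError("no latency samples")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def meets_sla(samples_ms, limit_ms=1.0, pct=99):
    """True if the pct-th percentile latency is at or below limit_ms."""
    return percentile(samples_ms, pct) <= limit_ms
```

With 100 samples, a single slow request does not break the SLA, because the 99th-ranked sample is still fast; two slow requests do.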

Each test ran for 2 minutes with a 1:9 set:get ratio (1 key/value write for every 9 key/value reads) and a 32-byte payload, which is common for in-memory caches. We initially chose a number of threads and clients per thread sufficient to load one instance of Memcached-server while keeping the p.99 latency at or below 1 ms. The pipelining feature in Memcached allows a client to pack multiple requests into a single request packet, which reduces packet-processing overhead. This can dramatically reduce the number of round trips between the client and Memcached-server, which improves response times.
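To make the pipelining idea concrete, the sketch below packs one set and nine gets (the 1:9 ratio) into a single byte buffer that a client could send in one write. It uses the Memcached text protocol for readability, whereas the tests in this brief used the binary protocol; the function name and key names are hypothetical.

```python
def build_pipelined_batch(keys, value: bytes,
                          sets_per_cycle: int = 1,
                          gets_per_cycle: int = 9) -> bytes:
    """Concatenate memcached text-protocol commands into one buffer.

    Within every cycle of (sets_per_cycle + gets_per_cycle) keys, the first
    sets_per_cycle keys are written and the rest are read.
    """
    cycle = sets_per_cycle + gets_per_cycle
    parts = []
    for i, key in enumerate(keys):
        k = key.encode()
        if i % cycle < sets_per_cycle:
            # set <key> <flags> <exptime> <bytes>\r\n<data>\r\n
            parts.append(b"set %s 0 0 %d\r\n%s\r\n" % (k, len(value), value))
        else:
            # get <key>\r\n
            parts.append(b"get %s\r\n" % k)
    return b"".join(parts)


# Ten requests with a 32-byte payload, packed into a single buffer.
batch = build_pipelined_batch([f"key:{i}" for i in range(10)], b"x" * 32)
print(len(batch), "bytes for 10 requests")
```

A real client would write this buffer to the socket once and then read the ten responses in order, instead of paying one round trip per request.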

Next, we successively increased the number of memtier_benchmark threads, the number of clients per thread, and the pipeline depth until a combination violated the p.99 latency SLA. The maximum throughput achieved within the 1 ms p.99 SLA was used as the primary performance metric (see Table-1).
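The search procedure described above can be sketched as a simple loop. Here `run_benchmark` is a hypothetical callable that runs one load-generator pass for a given (threads, clients per thread, pipeline depth) combination and returns the measured throughput and p.99 latency; the brief does not describe the actual automation, so this is an assumption about its general shape.

```python
from typing import Callable, Iterable, Optional, Tuple

Config = Tuple[int, int, int]   # (threads, clients per thread, pipeline depth)
Result = Tuple[float, float]    # (operations per second, p99 latency in ms)


def max_throughput_under_sla(
    configs: Iterable[Config],
    run_benchmark: Callable[[int, int, int], Result],
    p99_limit_ms: float = 1.0,
) -> Optional[Tuple[Config, float]]:
    """Walk configs in order of increasing load and stop at the first one
    that violates the p99 limit. Return the best qualifying
    (config, throughput) pair, or None if nothing qualified."""
    best = None
    for config in configs:
        ops_per_sec, p99_ms = run_benchmark(*config)
        if p99_ms > p99_limit_ms:
            break  # SLA violated: heavier combinations are not tried
        if best is None or ops_per_sec > best[1]:
            best = (config, ops_per_sec)
    return best
```

Stopping at the first violation mirrors the text; a more exhaustive sweep could keep going, since throughput and latency do not always grow monotonically with load.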

The following configurations and settings were used while benchmarking Memcached on Azure VMs.

| | Standard D16ps v5 (Ampere Altra) | Standard D16as v5 (AMD Milan) | Standard D16s v5 (Intel Ice Lake) |
|---|---|---|---|
| OS | Ubuntu 20.04.4 LTS | Ubuntu 20.04.4 LTS | Ubuntu 20.04.4 LTS |
| Kernel | 5.13.0-1021-azure | 5.13.0-1021-azure | 5.13.0-1021-azure |
| vCPUs | 16 | 16 | 16 |
| Memory | 64 GB | 64 GB | 64 GB |
| glibc version | 2.31 | 2.31 | 2.31 |
| Memcached version | 1.6.9 | 1.6.9 | 1.6.9 |
| Memcached config | 4 instances each with 4 memcached threads | 4 instances each with 4 memcached threads | 4 instances each with 4 memcached threads |
| Memtier version | 1.3.0 | 1.3.0 | 1.3.0 |
| Memtier config | " --memtier_protocol=memcache_binary --memtier_data_size=32 --memtier_ratio='1:9' --memtier_key_pattern='R:R' --memcached_num_threads=16 --memtier_clients="$c" --memtier_threads="$t" --memtier_pipeline="$p" " | " --memtier_protocol=memcache_binary --memtier_data_size=32 --memtier_ratio='1:9' --memtier_key_pattern='R:R' --memcached_num_threads=16 --memtier_clients="$c" --memtier_threads="$t" --memtier_pipeline="$p" " | " --memtier_protocol=memcache_binary --memtier_data_size=32 --memtier_ratio='1:9' --memtier_key_pattern='R:R' --memcached_num_threads=16 --memtier_clients="$c" --memtier_threads="$t" --memtier_pipeline="$p" " |
| Clients | D32ps v5 | D32s v5 | D32s v5 |
| Client config | t=8, c=2, p=50 | t=24, c=2, p=25 | t=16, c=5, p=25 |
| Hourly cost (on-demand, USD) | 0.616 | 0.688 | 0.768 |
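The options in the Memtier config row appear to belong to a wrapper script rather than to memtier_benchmark itself. The sketch below shows how the same settings might map onto the native `memcached` and `memtier_benchmark` command lines. The mapping, the server address, the port numbers, and the way load is directed at each of the four instances are all assumptions, not details taken from the tests.

```python
import shlex


def memcached_commands(instances=4, threads=4, base_port=11211):
    """One memcached command per instance, each with its own port.
    Consecutive ports starting at 11211 are an assumption."""
    return [
        ["memcached", "-t", str(threads), "-p", str(base_port + i)]
        for i in range(instances)
    ]


def memtier_command(server, port, threads, clients, pipeline):
    """memtier_benchmark invocation for the workload described in this brief:
    binary protocol, 32-byte values, 1:9 set:get, random keys, 2 minutes."""
    return [
        "memtier_benchmark",
        f"--server={server}",
        f"--port={port}",
        "--protocol=memcache_binary",
        "--data-size=32",
        "--ratio=1:9",
        "--key-pattern=R:R",
        "--test-time=120",
        f"--threads={threads}",
        f"--clients={clients}",
        f"--pipeline={pipeline}",
    ]


for cmd in memcached_commands():
    print(shlex.join(cmd))

# Client settings for the D16ps v5 column (t=8, c=2, p=50).
# 10.0.0.4 is a placeholder address for the server VM.
print(shlex.join(memtier_command("10.0.0.4", 11211, 8, 2, 50)))
```

Printing the commands rather than executing them keeps the sketch safe to run anywhere; the printed lines can be adapted once the real addresses and ports are known.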

Key Findings and Conclusions

Memcached was one of the first fast, open-source in-memory key-value stores and is still used at scale in large production deployments. On Cloud Native applications such as Memcached, the Ampere Altra-based Dpsv5 VMs outperform the legacy x86 offerings, with up to 28% higher performance and up to a 43% price-performance advantage. For cloud architects, choosing the Ampere Altra-based Azure Dpsv5 VMs leads to better performance and price-performance while reducing their carbon footprint.

For more information about Azure Virtual Machines with Ampere Altra Arm-based processors, visit the Azure blog.

Footnotes

All data and information contained herein is for informational purposes only and Ampere reserves the right to change it without notice. This document may contain technical inaccuracies, omissions and typographical errors, and Ampere is under no obligation to update or correct this information. Ampere makes no representations or warranties of any kind, including but not limited to express or implied guarantees of noninfringement, merchantability, or fitness for a particular purpose, and assumes no liability of any kind. All information is provided “AS IS.” This document is not an offer or a binding commitment by Ampere. Use of the products contemplated herein requires the subsequent negotiation and execution of a definitive agreement or is subject to Ampere’s Terms and Conditions for the Sale of Goods.

System configurations, components, software versions, and testing environments that differ from those used in Ampere’s tests may result in different measurements than those obtained by Ampere.

Price performance was calculated using Microsoft's Virtual Machines Pricing, in September of 2022. Refer to individual tests for more information.

©2022 Ampere Computing. All Rights Reserved. Ampere, Ampere Computing, Altra and the ‘A’ logo are all registered trademarks or trademarks of Ampere Computing. Arm is a registered trademark of Arm Limited (or its subsidiaries). All other product names used in this publication are for identification purposes only and may be trademarks of their respective companies.

Ampere Computing® / 4655 Great America Parkway, Suite 601 / Santa Clara, CA 95054 / amperecomputing.com