Ampere AI

The best GPU-Free alternative for AI Inferencing workloads

Are you a developer? > Power Your AI

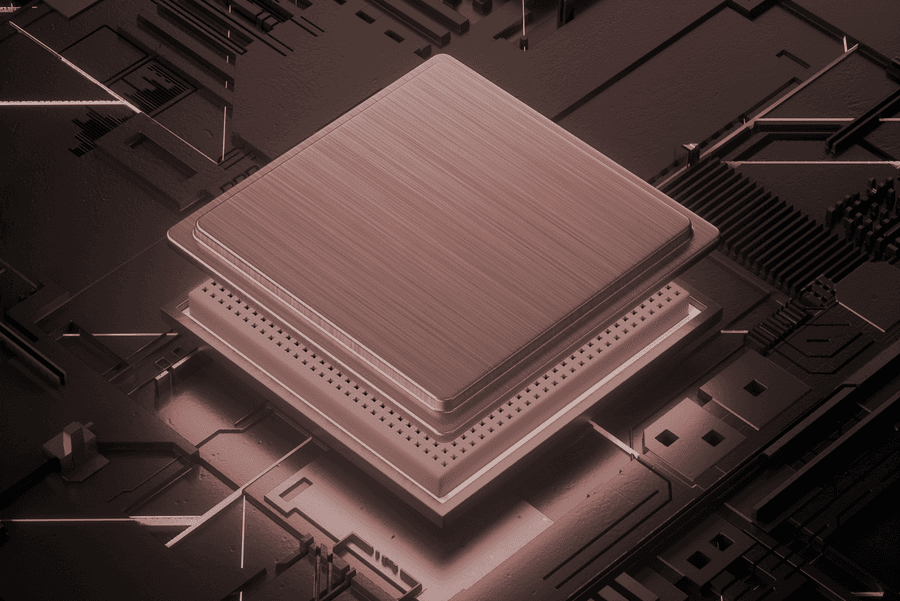

Unlock AI Inference Efficiency with Ampere Cloud Native Processors

Ampere Cloud Native Processors, paired with Ampere Optimized AI Frameworks, are uniquely positioned to offer GPU-Free AI inference at performance levels that meet client needs across AI workloads, whether generative AI, NLP, recommender engines, or computer vision.

GPU-Free AI Inference Servers

Key Benefits

GPU-Free

- Unmatched price-performance for a variety of ML workloads

- Top-of-the-line energy efficiency

- Quick and seamless provisioning with instant availability

AI Efficiency

Reduce power consumption without sacrificing performance and build a sustainable future.

> Computer Vision

> Natural Language Processing

> Recommender Engines

Right-Sizing AI Compute

FP16 vs FP32

Compared with FP32, the FP16 data format boosts AI inference performance.
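As a rough illustration of the FP16 versus FP32 trade-off, the sketch below uses only Python's standard `struct` module to compare the two formats: each FP16 value occupies half the bytes of an FP32 value, at the cost of coarser rounding. The helper names (`round_to`, `tensor_bytes`), the weight count, and the sample values are hypothetical and chosen for illustration; this is not Ampere's framework code and it does not measure inference speed.

```python
import struct

# struct format codes: "e" is IEEE 754 binary16 (FP16), "f" is binary32 (FP32).
FP16_BYTES = struct.calcsize("<e")
FP32_BYTES = struct.calcsize("<f")


def round_to(fmt: str, x: float) -> float:
    """Round x to the nearest value representable in the given struct format."""
    return struct.unpack(fmt, struct.pack(fmt, x))[0]


def tensor_bytes(num_values: int, bytes_per_value: int) -> int:
    """Storage needed for num_values numbers at a given width in bytes."""
    return num_values * bytes_per_value


if __name__ == "__main__":
    num_weights = 1_000_000  # hypothetical model size, for illustration only
    print("FP32 bytes:", tensor_bytes(num_weights, FP32_BYTES))
    print("FP16 bytes:", tensor_bytes(num_weights, FP16_BYTES))

    # Made-up weight values: show how each format rounds the same number.
    for w in (0.1, -1.2345678, 3.14159265):
        print(w, round_to("<f", w), round_to("<e", w))
```

Halving the bytes per value means the same memory traffic carries twice as many values, which is one commonly cited reason reduced precision can help inference throughput. Whether FP16 accuracy is acceptable depends on the model, so the rounding error is worth checking per workload.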

> Computer Vision

> Natural Language Processing

> Recommender Engines

Created At : April 10th 2024, 4:03:59 pm

Last Updated At : July 8th 2024, 8:28:11 pm

© 2024 Ampere Computing LLC. All rights reserved. Ampere, Altra and the A and Ampere logos are registered trademarks or trademarks of Ampere Computing.

This site is running on Ampere Altra Processors.